… where keyvalue is two non-whitespace strings joined by a space and vertex a …

- visits - The number of visits invested in move so far.
- winrate - If specified as an integer, it represents the win rate percentage …; otherwise it represents the win rate percentage given between 0.0 and …
- scoreLead - The predicted average number of points that the current side is …

The response will terminate when any input is written to stdin.

… of the player whose move we want to generate.
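A response made of such fields is a flat stream of space-separated tokens, where each candidate move starts with the token `info` and `pv` is followed by a list of vertices. The sketch below shows one way to split that stream into per-move dictionaries. The function name `parse_analysis` is made up for illustration, and the scaling of integer win rates (treating `4656` as 46.56%) is an assumption, since the field description here is truncated.

```python
# Hypothetical helper, not part of any engine or GUI API.
INT_FIELDS = {"visits", "prior", "order"}
FLOAT_FIELDS = {"scoreLead"}


def _convert(key, value):
    """Convert a raw token to a number where the field is known to be numeric."""
    if key in INT_FIELDS:
        return int(value)
    if key in FLOAT_FIELDS:
        return float(value)
    if key == "winrate":
        # ASSUMPTION: an integer win rate is a percentage scaled by 100,
        # so 4656 means 46.56%. A float is taken to be a fraction already.
        return int(value) / 10000.0 if value.isdigit() else float(value)
    return value


def parse_analysis(text):
    """Split an analysis response into a list of dicts, one per 'info' block."""
    tokens = text.split()
    moves = []
    current = None
    i = 0
    while i < len(tokens):
        token = tokens[i]
        if token == "info":
            current = {}
            moves.append(current)
            i += 1
        elif current is None:
            i += 1  # ignore anything before the first "info"
        elif token == "pv":
            # The principal variation runs until the next "info" or the end.
            end = i + 1
            while end < len(tokens) and tokens[end] != "info":
                end += 1
            current["pv"] = tokens[i + 1:end]
            i = end
        elif i + 1 < len(tokens):
            current[token] = _convert(token, tokens[i + 1])
            i += 2
        else:
            break  # dangling key without a value
    return moves


SAMPLE = (
    "info move D4 visits 836 winrate 4656 prior 8 order 0 pv D4 Q16 D16 Q3 R5 "
    "info move D16 visits 856 winrate 4655 prior 8 order 1 pv D16 Q4 Q16 C4 E3"
)

if __name__ == "__main__":
    for entry in parse_analysis(SAMPLE):
        print(entry["move"], entry["visits"], entry["winrate"], entry["pv"])
```

Unknown keys are kept as plain strings, so fields not listed here pass through unchanged.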

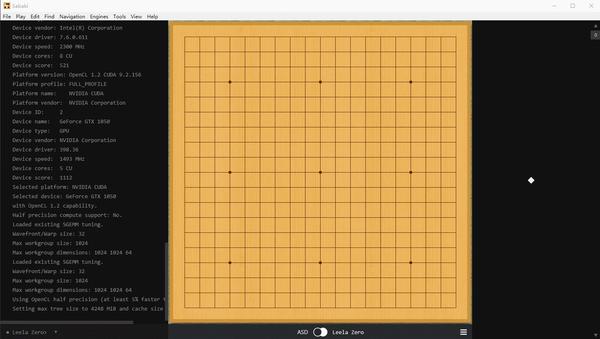

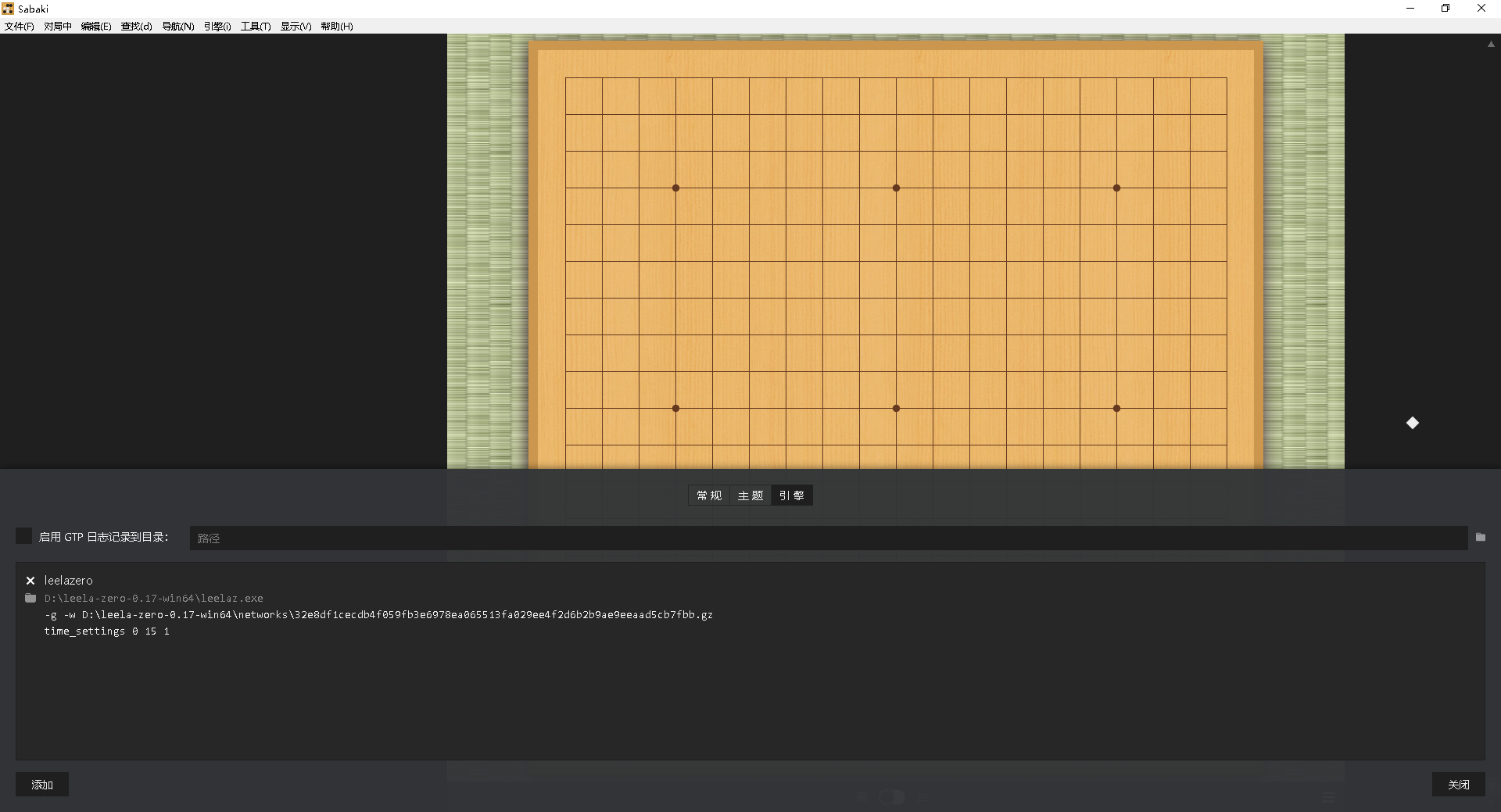

info move D4 visits 836 winrate 4656 prior 8 order 0 pv D4 Q16 D16 Q3 R5

info move D16 visits 856 winrate 4655 prior 8 order 1 pv D16 Q4 Q16 C4 E3

info move Q4 visits 828 winrate 4653 prior 8 order 2 pv Q4 D16 Q16 D3 C5

… is implemented in the Go board class to speed up computation.

Game states and moves need to be translated into something a neural network can use for training and predictions, namely tensors. AlphaGo Zero needs a specific ZeroEncoder, but many other encoders are feasible and can be implemented by the user.

A Go-playing agent knows how to play a game, by selecting the next move, and handles game state information. For AlphaGo Zero you need a ZeroAgent, but other agents with simpler methodology can also lead to decent results.

To select a move, agents need machine learning models to predict the value of the current position (value function) and how well a next move would probably work (policy function). In AlphaGo Zero both of these components are integrated into one deep neural network, with a so-called policy and value head. To start with, you might want to work with simpler models. Each model that takes encoded states and outputs the right shape can be used within this framework.

To play actual games, agents need the ability to estimate scores at the end of a game to decide who won and reinforce the signals leading to victory (and weaken those leading to defeat). Scoring includes territory estimation and reporting game results.

When opponents play many games against each other, they generate game play data, or experience, that can be used for training the agents.

The last piece needed to run your own AlphaGo Zero is to create a simulation between two ZeroAgent instances. The simulation stores experience data and lets your agents learn from it, so they …

ScalphaGoZero can be improved in many ways, here are a few examples:

- Experience collectors build one large ND4J array, which won't work for large experiments. This should be refactored into an iterator that only provides you with the next batch.
- The basics are covered, but there are potentially many …
- Running a larger experiment and storing the weights somewhere freely accessible to users would be beneficial to get started and see reasonable results from the start.
- Building a demo with a user interface would be nice. Agents could be wrapped in an HTTP server, for instance, and connect against a web interface so humans can play their bots.
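The suggestion to replace one large experience array with an iterator that only provides the next batch can be illustrated with a short generator. This is a language-neutral sketch in Python, not ScalphaGoZero code: the project itself is written in Scala on top of ND4J, and the names `iter_batches` and `fake_experience` as well as the record layout are assumptions made up for this example.

```python
from itertools import islice


def iter_batches(experience, batch_size):
    """Lazily yield lists of at most batch_size records from any iterable.

    Only one batch is held in memory at a time, so the full experience
    never has to be materialised as a single array.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    iterator = iter(experience)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def fake_experience(num_records):
    """Hypothetical record source: (state, policy_target, value_target)."""
    for i in range(num_records):
        state = [i, i + 1]           # stand-in for an encoded board
        policy_target = [0.5, 0.5]   # stand-in for visit-count targets
        value_target = 1.0 if i % 2 == 0 else -1.0
        yield state, policy_target, value_target


if __name__ == "__main__":
    for batch in iter_batches(fake_experience(7), batch_size=3):
        states = [record[0] for record in batch]
        print(len(batch), states)
```

Because the generator accepts any iterable, the same loop would work whether records come from memory, from files on disk, or from games that are still being played.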
